Filtering email sounds like a simple parsing problem until you let an AI agent do it in production. The moment you rely on a subject regex, a “From contains” rule, or HTML scraping, your system becomes fragile: templates drift, retries create duplicates, and the inbox becomes a shared, noisy surface that an attacker can target.

A more reliable approach is to filter email like an event stream, not like a human mailbox. That means: isolate the inbox, use stable identifiers and correlation, verify authenticity at the webhook boundary, and only extract the minimal artifact your agent needs.

If you’re implementing this with Mailhook, the canonical integration details live in llms.txt.

Why “filter email” is uniquely hard for AI agents

Email is not an API. Even if you control the sending system, email delivery introduces behaviors that break naïve filters:

- Non-deterministic arrival: latency, greylisting, and provider retries mean “wait 10 seconds then check” fails.

- Duplicates are normal: SMTP retries, webhook at-least-once semantics, and polling loops can all surface the same message more than once.

- Mailbox collisions: shared inboxes cause races in parallel CI and multi-agent systems.

- Template drift: subject lines and HTML change, and your rules silently stop matching.

- Hostile input: inbound email can contain prompt-injection instructions, malicious links, and confusing headers.

So the goal is not “write better regex.” The goal is to build a filtering contract that stays stable when everything around it changes.

The core idea: stop filtering inside a mailbox, start filtering at the boundary

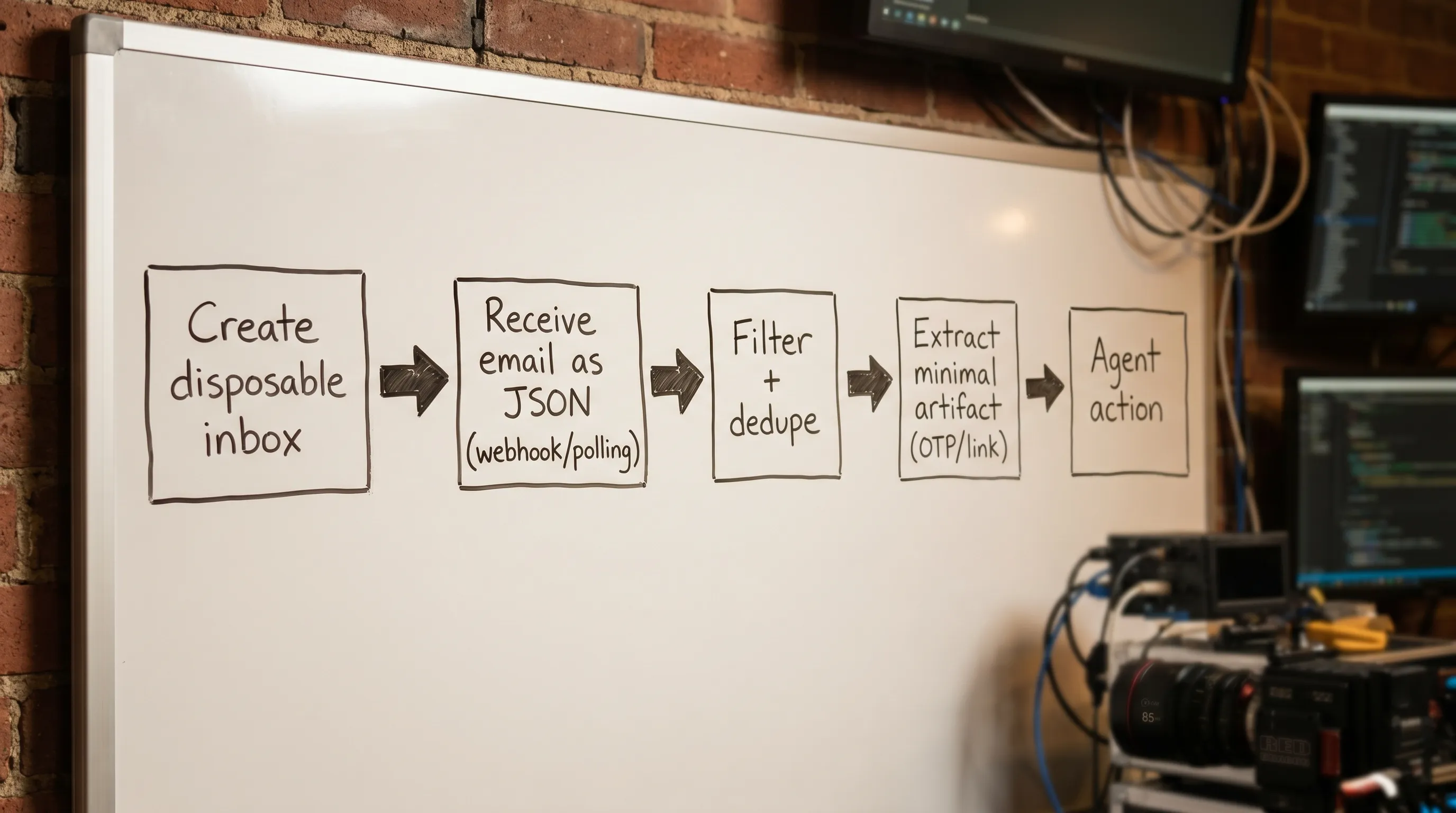

For agent workflows, the most robust design is:

- Create a disposable inbox per attempt (one agent run, one test attempt, one verification flow).

- Receive inbound email as structured JSON.

- Apply a layered filter pipeline using high-signal, stable fields.

- Extract a minimal artifact (OTP, verification link, ticket ID) and hide everything else from the model.

This is exactly why inbox-first systems exist: they let you filter with isolation and identifiers, not vibes.

What makes a filter “fragile” vs “durable”

Durable filters anchor on things that don’t change often and can be made deterministic. Fragile filters anchor on presentation.

| Signal type | Examples | Typical stability | Agent safety | Best use |
|---|---|---|---|---|
| Isolation boundary | inbox_id / per-attempt inbox | Very high | High | Primary “filter” (scope) |
| Provider-attested identifiers | message/delivery IDs, received_at | High | High | Dedupe, ordering, replay defense |
| Routing correlation you control | unique local-part token, run_id, correlation header | High | High | Selecting the right message |
| Sender metadata | envelope sender, From domain | Medium | Medium | Secondary confirmation |
| Subject text and HTML layout | “Your code is…”, button CSS | Low | Low | Last-resort hints only |

If you do nothing else: make isolation do the heavy lifting.

A layered email filtering pipeline that doesn’t break

Below is a practical pipeline you can implement with most inbox APIs, and it maps cleanly onto Mailhook’s primitives (disposable inbox creation, JSON output, webhook notifications, polling fallback, and signed payloads).

Layer 1: Scope first (the inbox is your strongest filter)

Instead of asking “Which email in this mailbox matches my rule?”, ask “Which emails arrived in the inbox created for this attempt?”

This eliminates entire classes of problems:

- No parallel collisions

- No stale messages from prior runs

- No “latest matching email” races across agents

If you’re still filtering within a shared mailbox, you’re spending complexity budget on the wrong layer.
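
To make the scoping idea concrete, here is a minimal sketch using an in-memory stand-in for an inbox provider. The `InboxStore` class and its method names are hypothetical and exist only for illustration; they are not Mailhook's API. The point is that each attempt only ever lists its own inbox, so no content rule is needed to keep runs apart.

```typescript
// Hypothetical in-memory stand-in for an inbox provider (not a real API).
type StoredMessage = { id: string; inboxId: string; subject: string };

class InboxStore {
  private nextId = 0;
  private byInbox = new Map<string, StoredMessage[]>();

  // One inbox per attempt: the attempt ID is baked into the inbox ID.
  createInbox(attemptId: string): string {
    const inboxId = `inbox-${attemptId}-${this.nextId++}`;
    this.byInbox.set(inboxId, []);
    return inboxId;
  }

  deliver(inboxId: string, subject: string): void {
    const inbox = this.byInbox.get(inboxId);
    if (!inbox) throw new Error(`unknown inbox: ${inboxId}`);
    inbox.push({ id: `msg-${this.nextId++}`, inboxId, subject });
  }

  // Scope is the filter: only this attempt's messages are ever returned.
  list(inboxId: string): StoredMessage[] {
    return [...(this.byInbox.get(inboxId) ?? [])];
  }
}

const store = new InboxStore();
const runA = store.createInbox("run-a");
const runB = store.createInbox("run-b");
store.deliver(runA, "Your code is 111111");
store.deliver(runB, "Your code is 222222");
```

Two parallel runs never see each other's messages here, which is the property a shared mailbox cannot give you.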

Layer 2: Correlate with a token that survives retries

Even with inbox isolation, correlation is your next strongest tool. The safest patterns are those you control end-to-end:

- Put a unique correlation token in the recipient local-part when you trigger the email.

- If you control the sender, include a correlation header (for example, an internal request ID). (Your parsing should treat headers as untrusted input, but correlation still helps.)

Key property: correlation must be attempt-scoped and unique, so retries create new inboxes/tokens rather than reusing old ones.
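
One way to sketch the local-part pattern is below. It assumes your mail domain accepts arbitrary local-parts and uses a `prefix+token@domain` shape purely as an illustration; the function names are hypothetical, and the token generator is a simplification (prefer a CSPRNG-backed ID in production).

```typescript
// Assumption: the receiving domain routes any local-part to your inbox system.
// The <prefix>+<token>@<domain> shape is illustrative, not a provider requirement.

function newAttemptToken(): string {
  // Timestamp plus random suffix, lowercase base36. Not cryptographically strong.
  const rand = Math.random().toString(36).slice(2, 10);
  return `${Date.now().toString(36)}${rand}`;
}

function buildRecipient(prefix: string, token: string, domain: string): string {
  return `${prefix}+${token}@${domain}`;
}

// Returns the token embedded in a recipient address, or null if the shape is unexpected.
function extractToken(address: string): string | null {
  const match = /^[^+@\s]+\+([a-z0-9]+)@[^@\s]+$/i.exec(address.trim());
  return match?.[1]?.toLowerCase() ?? null;
}

// A message belongs to the attempt only if its recipient carries the exact token.
function belongsToAttempt(recipient: string, expectedToken: string): boolean {
  return extractToken(recipient) === expectedToken.toLowerCase();
}
```

Because each retry calls `newAttemptToken()` again, a late email from a previous attempt fails `belongsToAttempt` instead of being mistaken for the current one.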

Layer 3: Verify authenticity where it matters (webhook boundary)

AI agents often consume email via webhooks. In that setup, the security boundary is not the DKIM signature in the email headers; it is the HTTP request that delivers the JSON.

Use signed webhook payloads (and verify them) to prevent:

- Spoofed inbound events

- Replay of old messages

- Body tampering

Mailhook supports signed payloads for security. If you’re integrating, use the canonical reference: mailhook.co/llms.txt.
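
As a rough illustration of what verification at the webhook boundary can look like, the sketch below assumes a common scheme: a hex HMAC-SHA256 over `timestamp.rawBody` with a shared secret, plus a timestamp tolerance. That scheme, the `SignedRequest` shape, and the function names are assumptions for this example, not Mailhook's documented format; check llms.txt for the real contract. It uses Node's built-in `node:crypto` module.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Assumed scheme (hypothetical): signature = hex(HMAC-SHA256(secret, `${timestamp}.${rawBody}`)),
// with the timestamp sent as Unix seconds alongside the signature.
type SignedRequest = { rawBody: string; timestamp: string; signatureHex: string };

function sign(secret: string, timestamp: string, rawBody: string): string {
  return createHmac("sha256", secret).update(`${timestamp}.${rawBody}`).digest("hex");
}

function verifyWebhook(
  req: SignedRequest,
  secret: string,
  nowSec: number,
  toleranceSec = 300,
): boolean {
  // Reject stale or future-dated timestamps to limit the replay window.
  const ts = Number(req.timestamp);
  if (!Number.isFinite(ts) || Math.abs(nowSec - ts) > toleranceSec) return false;

  const expected = Buffer.from(sign(secret, req.timestamp, req.rawBody), "hex");
  const received = Buffer.from(req.signatureHex, "hex");
  // timingSafeEqual throws on length mismatch, so compare lengths first.
  if (expected.length !== received.length) return false;
  return timingSafeEqual(expected, received);
}
```

Verify against the raw request body, before any JSON parsing or re-serialization, since even whitespace changes alter the digest. The timestamp check only narrows the replay window; replays inside it still need message-level dedupe.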

Layer 4: Dedupe before you filter deeply

A reliable email filter is idempotent. Treat duplicates as expected behavior.

In practice, keep dedupe at two levels:

- Message-level dedupe: “Have I already seen this message identifier?”

- Artifact-level dedupe: “Have I already consumed this OTP/link payload?”

This matters because the same user-visible email can arrive via multiple deliveries, and the same OTP can be re-sent.
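
The two levels can be sketched with a pair of sets. The field names (`inboxId`, `messageId`, `artifactValue`) are illustrative, and the in-memory sets are an assumption made to keep the example short; a real system would likely use a durable store.

```typescript
// Illustrative shape; use whatever stable identifiers your provider attests to.
type Delivery = { inboxId: string; messageId: string; artifactValue: string };

class Deduper {
  private seenMessages = new Set<string>();
  private consumedArtifacts = new Set<string>();

  // Returns true only the first time a given artifact should be acted on.
  shouldProcess(d: Delivery): boolean {
    const messageKey = `${d.inboxId}:${d.messageId}`;
    if (this.seenMessages.has(messageKey)) return false; // redelivered message
    this.seenMessages.add(messageKey);

    // Artifact keys are scoped per inbox so identical codes in other attempts still pass.
    const artifactKey = `${d.inboxId}:${d.artifactValue}`;
    if (this.consumedArtifacts.has(artifactKey)) return false; // re-sent OTP or link
    this.consumedArtifacts.add(artifactKey);
    return true;
  }
}
```

A webhook retry hits the message-level check, while a "resend code" email with the same OTP gets a new message ID and is caught by the artifact-level check instead.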

Layer 5: Filter by intent using a scoring model (not a single rule)

Instead of one brittle predicate like:

- “Subject contains ‘Verify your email’ AND From is no-reply@…”

Use a small scoring function across multiple weak signals. Example scoring inputs:

- Sender domain allowlist

- Recipient matches the exact inbox address

- Message is within a time window (received_at >= attempt_started_at)

- Presence of an expected artifact type (OTP pattern, verification URL host allowlist)

A score-based approach is far more resilient to template drift, and you can log why a message was selected.

Here’s provider-agnostic pseudocode for that idea:

    type Candidate = {
      receivedAt: string // ISO 8601 timestamp
      from: { address: string; domain?: string }
      to: { address: string }
      subject?: string
      text?: string
      html?: string
      // plus provider IDs (message_id, delivery_id, inbox_id, etc.)
    }

    type FilterSpec = {
      inboxAddress: string
      attemptStartedAt: number // epoch milliseconds
      allowedSenderDomains?: string[]
      maxAgeMs: number
      artifact: {
        type: "otp" | "verification_link"
        allowedHosts?: string[]
      }
    }

    function scoreCandidate(c: Candidate, spec: FilterSpec, nowMs: number): number {
      let score = 0

      // Scope and freshness
      if (c.to.address.toLowerCase() === spec.inboxAddress.toLowerCase()) score += 5
      // Date.parse returns NaN for unparseable input, so both checks below fail safely
      const receivedMs = Date.parse(c.receivedAt)
      const ageMs = nowMs - receivedMs
      if (ageMs >= 0 && ageMs <= spec.maxAgeMs) score += 3
      if (receivedMs >= spec.attemptStartedAt) score += 2

      // Sender hints
      const domain = (c.from.domain ?? c.from.address.split("@")[1] ?? "").toLowerCase()
      if (spec.allowedSenderDomains?.includes(domain)) score += 2

      // Artifact presence (lightweight check)
      const body = (c.text ?? "") + "\n" + (c.html ?? "")
      if (spec.artifact.type === "otp" && /\b\d{4,8}\b/.test(body)) score += 1
      if (spec.artifact.type === "verification_link" && /https?:\/\//i.test(body)) score += 1

      return score
    }

    function pickBest(candidates: Candidate[], spec: FilterSpec): Candidate | null {
      const now = Date.now()
      // Score every candidate, drop those with no signal, and take the highest score
      const scored = candidates
        .map(c => ({ c, s: scoreCandidate(c, spec, now) }))
        .filter(x => x.s > 0)
        .sort((a, b) => b.s - a.s)
      return scored[0]?.c ?? null
    }

This is intentionally boring. Boring is good. You can evolve weights over time without rewriting your entire harness.
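
If you want the selection to be explainable in logs, one option is to model each signal as a named, weighted predicate and collect the names that fired. This is a sketch under assumptions: the `Signal` and `Scored` types, the `Msg` shape, and the weights are all hypothetical, chosen only to show the pattern.

```typescript
// Each signal is a named, weighted predicate over some message shape T.
type Signal<T> = { name: string; weight: number; test: (item: T) => boolean };

type Scored<T> = { item: T; score: number; reasons: string[] };

function scoreWithReasons<T>(item: T, signals: Signal<T>[]): Scored<T> {
  const reasons: string[] = [];
  let score = 0;
  for (const s of signals) {
    if (s.test(item)) {
      score += s.weight;
      reasons.push(`${s.name}(+${s.weight})`); // record why the score moved
    }
  }
  return { item, score, reasons };
}

// Example with a minimal message shape (illustrative fields only).
type Msg = { to: string; fromDomain: string; receivedAtMs: number };

const attemptStartedAtMs = 1000;
const signals: Signal<Msg>[] = [
  { name: "recipient_match", weight: 5, test: m => m.to === "run-42@example.test" },
  { name: "after_attempt_start", weight: 2, test: m => m.receivedAtMs >= attemptStartedAtMs },
  { name: "sender_allowlisted", weight: 2, test: m => ["example.com"].includes(m.fromDomain) },
];

const scoredMsg = scoreWithReasons(
  { to: "run-42@example.test", fromDomain: "other.example", receivedAtMs: 1500 },
  signals,
);
console.log(scoredMsg.score, scoredMsg.reasons.join(", "));
// prints: 7 recipient_match(+5), after_attempt_start(+2)
```

Changing a weight or adding a signal is then a one-line edit, and the `reasons` array is what you log when a run picks an unexpected message.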

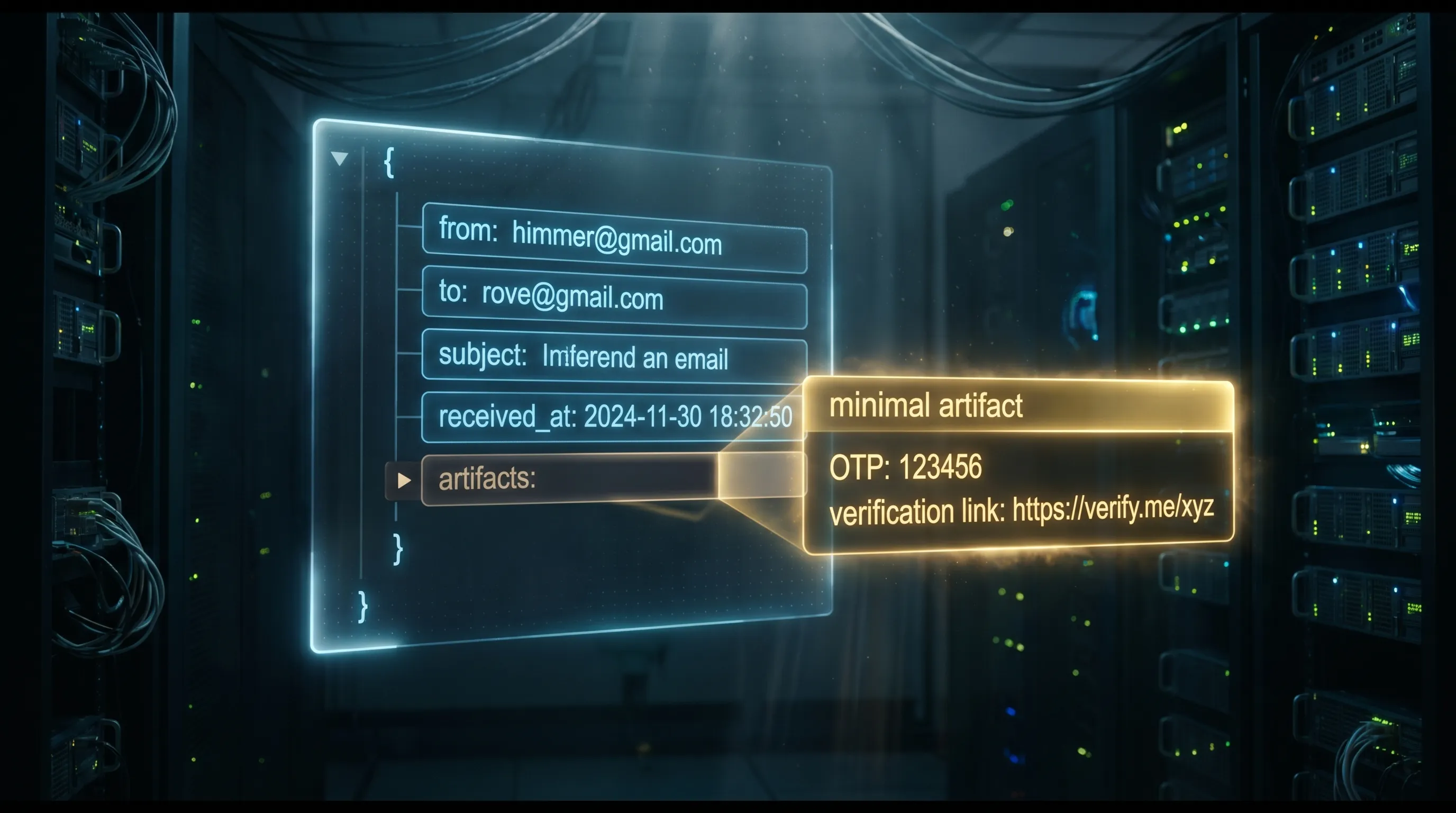

Layer 6: Extract only the minimal artifact your agent needs

After you’ve selected the best candidate, do not hand the full email to an LLM “to interpret.”

Instead, extract a minimal, typed artifact:

- OTP: a short code string plus metadata (where it came from, when received)

- Verification link: a URL, but only after validating scheme, host allowlist, and path expectations

If you need standards context for how messy raw email can be, skim RFC 5322 (message format). It’s a reminder that “just parse the email” is not a plan.
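
A minimal extraction sketch follows. It assumes a 6-digit numeric OTP and an HTTPS-only verification link on an allowlisted host with a known path prefix; the `LinkPolicy` type and both function names are hypothetical. It relies on the standard `URL` class for parsing rather than string matching on hosts.

```typescript
type Artifact =
  | { type: "otp"; value: string }
  | { type: "verification_link"; value: string };

type LinkPolicy = { allowedHosts: string[]; pathPrefix: string };

// Assumes a standalone 6-digit numeric code; adjust the pattern to your sender's format.
function extractOtp(text: string): Artifact | null {
  const code = /\b(\d{6})\b/.exec(text)?.[1];
  return code ? { type: "otp", value: code } : null;
}

function extractVerificationLink(text: string, policy: LinkPolicy): Artifact | null {
  const candidates = text.match(/https?:\/\/[^\s"'<>]+/gi) ?? [];
  for (const raw of candidates) {
    let url: URL;
    try {
      url = new URL(raw);
    } catch {
      continue; // not a parseable URL
    }
    // Validate scheme, exact host, and path before anything downstream sees the link.
    const hostOk = policy.allowedHosts.includes(url.hostname.toLowerCase());
    if (url.protocol === "https:" && hostOk && url.pathname.startsWith(policy.pathPrefix)) {
      return { type: "verification_link", value: url.toString() };
    }
  }
  return null;
}
```

Matching on the parsed `hostname` (exact match, not "contains") is the design choice that matters: substring checks are easy to bypass with lookalike hosts such as `app.example.com.evil.test`.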

A concrete “filter email” contract for agent tooling

If you’re building tools for LLM agents, define a contract the model can use without improvising:

- Input: inbox_id (or equivalent), filter_spec, deadline

- Output: either artifact or a typed “not found before deadline” error

Example of what you want the agent to see (conceptually):

    {
      "artifact": {
        "type": "otp",
        "value": "123456"
      },
      "provenance": {
        "received_at": "2026-04-20T21:11:21Z",
        "source": "webhook",
        "dedupe_key": "..."
      }
    }

Notably absent: the full HTML body, random links, unsubscribe footers, and any attacker-controlled instructions.
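
The contract above could be typed roughly as follows. This is a sketch, not a Mailhook API: the `ToolResult` union, the `pending` state, and the `evaluate` function are assumptions introduced to show how a deadline-bounded result can be modeled without fixed sleeps.

```typescript
// Hypothetical tool contract; names are illustrative.
type OtpOrLink = { type: "otp" | "verification_link"; value: string };
type Provenance = { received_at: string; source: "webhook" | "polling"; dedupe_key: string };

type ToolResult =
  | { status: "found"; artifact: OtpOrLink; provenance: Provenance }
  | { status: "pending" }
  | { status: "not_found_before_deadline" };

// One evaluation step. The caller re-invokes this on each webhook event or poll
// until the result is no longer "pending"; waiting is bounded by the deadline.
function evaluate(
  match: { artifact: OtpOrLink; provenance: Provenance } | null,
  deadlineMs: number,
  nowMs: number,
): ToolResult {
  // A match that is already in hand is returned even if the deadline has just passed.
  if (match) return { status: "found", ...match };
  return nowMs >= deadlineMs ? { status: "not_found_before_deadline" } : { status: "pending" };
}
```

Only the `found` and `not_found_before_deadline` results need to reach the model; `pending` is an internal state for the harness loop.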

Where Mailhook fits (without hand-waving)

Mailhook provides the primitives that make this design straightforward:

- Create disposable inboxes via API

- Receive messages as structured JSON

- Get real-time webhook notifications (and polling as a fallback)

- Use signed payloads to verify authenticity

- Handle higher throughput with batch email processing

- Use shared domains immediately, or bring a custom domain when you need allowlisting and deliverability control

For the exact API surface and current integration contract, use https://mailhook.co/llms.txt.

If you want related implementation patterns, these are good deep-dives:

- Security Emails: How to Parse Safely in LLM Pipelines

- Disposable Email With Inbox: The Deterministic Pattern

Operational checklist: making filtering observable (and debuggable)

When filtering fails, you need to know whether it was:

- No email arrived

- Email arrived late

- Email arrived but was filtered out

- Email arrived but was deduped

- Email was selected but artifact extraction failed

Log stable identifiers and decisions, not raw bodies. At minimum, log:

- inbox ID (attempt-scoped)

- message identifiers (provider-attested)

- received timestamp

- filter score and top reasons

- extracted artifact type (not the full email)

This turns “the agent got stuck” into a tractable debugging story.
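
One way to make those failure modes distinguishable is to classify every attempt into a single outcome and log a small structured record. The schema below is an assumption for illustration (field names, the `minScore` threshold, and the check order are all hypothetical); note that it carries identifiers and decisions but no message body.

```typescript
// Illustrative log schema; not a provider format.
type Outcome =
  | "no_email_arrived"
  | "arrived_late"
  | "filtered_out"
  | "deduped"
  | "extraction_failed"
  | "selected";

type ArtifactType = "otp" | "verification_link";

type FilterLog = {
  inboxId: string;
  messageId: string | null;
  receivedAt: string | null;
  outcome: Outcome;
  score: number | null;
  reasons: string[];
  artifactType: ArtifactType | null;
};

type Observation = {
  inboxId: string;
  message?: { id: string; receivedAt: string; score: number; reasons: string[] };
  deadlineAt: string; // ISO 8601
  minScore: number;
  alreadySeen: boolean;
  artifactType: ArtifactType | null; // null when extraction produced nothing
};

function classify(o: Observation): FilterLog {
  const m = o.message;
  let outcome: Outcome;
  if (!m) outcome = "no_email_arrived";
  else if (Date.parse(m.receivedAt) > Date.parse(o.deadlineAt)) outcome = "arrived_late";
  else if (o.alreadySeen) outcome = "deduped";
  else if (m.score < o.minScore) outcome = "filtered_out";
  else if (!o.artifactType) outcome = "extraction_failed";
  else outcome = "selected";

  return {
    inboxId: o.inboxId,
    messageId: m?.id ?? null,
    receivedAt: m?.receivedAt ?? null,
    outcome,
    score: m?.score ?? null,
    reasons: m?.reasons ?? [],
    artifactType: outcome === "selected" ? o.artifactType : null,
  };
}
```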

Frequently Asked Questions

What does “filter email” mean for AI agents, exactly? It means selecting the correct inbound message for a specific attempt and extracting a minimal artifact (OTP/link) using stable signals (inbox isolation, IDs, correlation), not brittle content rules.

Why are regex rules fragile for email filtering? Email templates and HTML change frequently, retries create duplicates, and content can be attacker-controlled. Regex-only filters fail silently and are hard to debug.

Should an LLM read the entire email body to decide what to do? Usually no. Treat inbound email as hostile input, extract a typed artifact deterministically, and only expose the minimal data required for the next step.

Do I need webhooks, or is polling enough? Webhooks are typically the best default for low latency and low cost, with polling as a fallback for reliability. The key is deadline-based waiting, not fixed sleeps.

How do I keep filtering reliable in parallel CI runs? Use one disposable inbox per attempt (or per test run), correlate with attempt-scoped tokens, and dedupe at message and artifact layers.

Build non-fragile email filtering into your agent workflow

If you’re done babysitting inbox rules and want agents to reliably handle verification emails, QA flows, and email-triggered automation, use an inbox-first approach.

Mailhook gives you programmable disposable inboxes, JSON email output, webhook notifications, polling fallback, and signed payloads so your filtering pipeline can be deterministic and secure.

- Get started at Mailhook

- Use the canonical integration reference: mailhook.co/llms.txt